1. Explicit activity or action

2. The roles and/or people being affected by the change

3. An outcome or effect as perceived by the people being impacted by the change

4. A constraint, such as a number of sessions or a time period, after which the experiment expires

Change agents should feel free to experiment with the exact format of an Improvement Experiment ticket. I tend to work with the following format:

-an action- -with/for- -a specific change participant- -will result in- -an outcome- -within some constraint-

An example could be:

"co-facilitated story mapping sessions with the business analysts and business subject matter experts will result in them feeling that they can effectively determine solution scope and structure after 3 supported sessions"

The activity is an explicit action that the change agent is going to execute in collaboration with change participants.

The specific change participant is simply one or more of the change participants listed on the canvas who will be participating in the Improvement Experiment.

The outcome is the expected result. This can take the form of improved capability, improved performance, or some other benefit. It's good practice to write the outcome from the perspective of the change participants: how they perceive that improvement is taking place (or not).

The constraint can be expressed as a time period, e.g. "after two weeks", or as a number of instances of a certain activity, e.g. "after three sessions". A constraint can also be specified as the occurrence of a specific event, e.g. "when the emergency defect occurs".
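The four parts described above can be sketched as a small data structure. This is only an illustration, not part of the method itself: the class name `ImprovementExperiment`, its field names, and the `hypothesis` method are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImprovementExperiment:
    """Hypothetical container for the four parts of an experiment ticket."""

    action: str        # explicit activity the change agent will carry out
    participants: str  # change participants taking part, as listed on the canvas
    outcome: str       # expected result, phrased as the participants perceive it
    constraint: str    # when the experiment expires (time, count, or event)
    link: str = "with"  # joins action and participants: "with" or "for"

    def hypothesis(self) -> str:
        # Assemble the parts in the order the ticket format uses.
        return (
            f"{self.action} {self.link} {self.participants} "
            f"will result in {self.outcome} {self.constraint}"
        )


experiment = ImprovementExperiment(
    action="co-facilitated story mapping sessions",
    participants="the business analysts and business subject matter experts",
    outcome=(
        "them feeling that they can effectively determine "
        "solution scope and structure"
    ),
    constraint="after 3 supported sessions",
)
print(experiment.hypothesis())
```

Filling in the fields this way reproduces the story mapping example sentence. Keeping the parts separate makes it easy to spot a ticket that is missing its constraint, or whose outcome is not written from the participants' point of view.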

It's important to phrase validation from the perspective of the change participants, rather than using language that specifies achievement of some goal in a generic way. For example:

"developers will become TDD ninjas after three weeks of coding dojos" isn't as good as "developers will indicate their mastery of TDD after three coding dojos".

We want to structure our outcomes like this because it helps to enforce the idea that all validation of an Improvement Experiment has to come from the change participant. It's not enough for a change agent to evaluate the impact of an improvement on change participants. Embedding language that hypothesizes how a change participant will indicate his reaction to a change encourages this mindset.

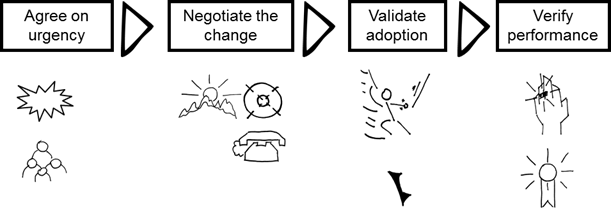

The nature of the experiment will vary depending on where a Minimum Viable Change is within the lifecycle. Here are some examples of Improvement Experiments that could be part of each of the four states within the validated change lifecycle:

· the online team can identify enough problem statements to provide a clear case for the adoption of agile technical practices after 3 facilitated workshops (agree on urgency)

· the portal technology group can articulate and contextualize a more agile working model after 1 week of brainstorming (negotiate change)

· analysts who are part of the International Teller Program will tell me that they can perform agile-style story analysis after 2 weeks of coaching (validate adoption)

· including developers in detailed story analysis will reduce defects by 50% after one month (verify performance)

Regardless of whether you use the rest of the method in its entirety, running an agile adoption or transformation as a set of Improvement Experiments helps to reframe a change agent's activity as something that can be put under constant scrutiny, helping the change agent examine his tactics and, if necessary, change his strategy.

For more, check the Lean Change Method: Managing Agile Transformation with Kanban, Kotter, and Lean Startup Thinking
